Prompt for AI Code Assistants

Adopt AI code assistants such as GitHub Copilot and Cursor while safeguarding secrets, scanning for vulnerabilities, and maintaining developer efficiency.

Accelerate the secure adoption of AI code assistants

Secrets and PII Protection

Instantly redact and sanitize code to prevent the exfiltration of secrets, PII, and IP when using AI code assistants.

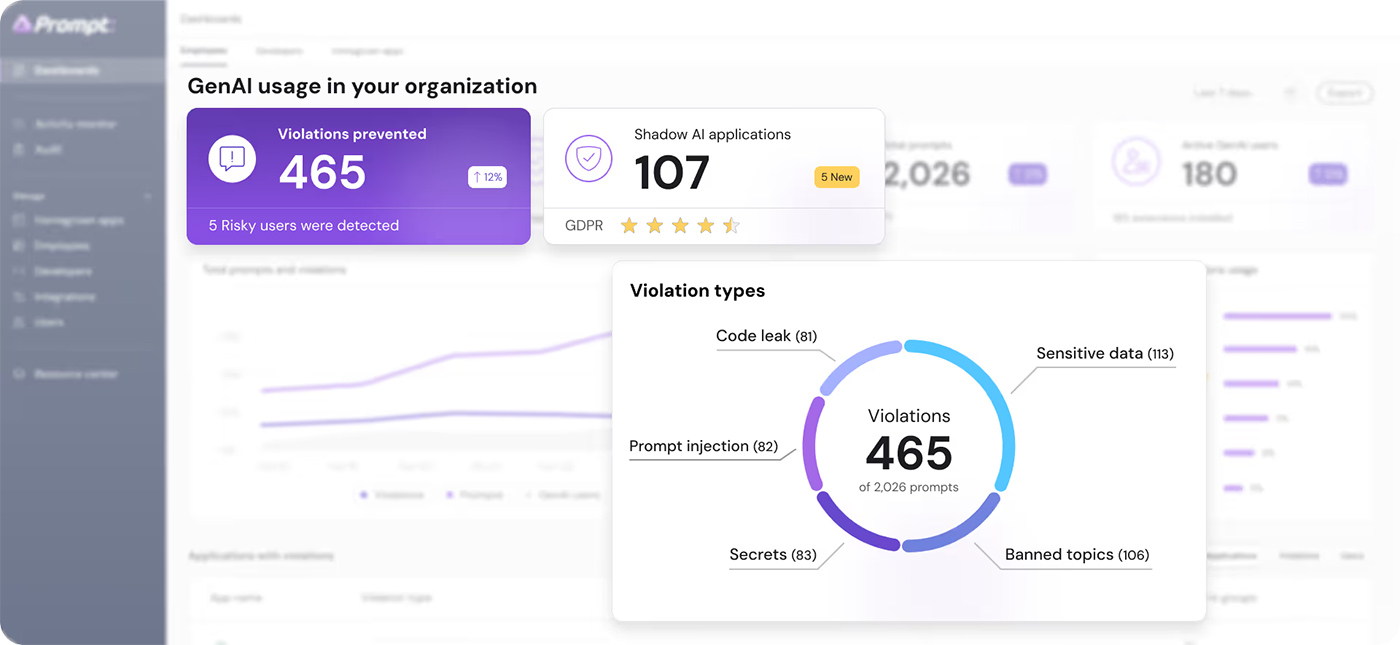
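
To make the idea of redaction concrete, here is a minimal sketch of pattern-based sanitization applied to a prompt before it leaves the developer's machine. It is an illustration only, not Prompt Security's implementation: the `redact` function, the `SECRET_PATTERNS` list, and the two regular expressions are assumptions chosen for brevity, and real detection would need far broader coverage.

```python
import re

# Hypothetical detection rules for illustration; real tooling would cover
# many more secret and PII formats than these two.
SECRET_PATTERNS = [
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    ("AWS_KEY", re.compile(r"\bAKIA[0-9A-Z]{16}\b")),
    # A deliberately simple email matcher (not RFC-complete).
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
]


def redact(prompt: str) -> str:
    """Replace every match of each pattern with a labeled placeholder."""
    for label, pattern in SECRET_PATTERNS:
        prompt = pattern.sub(f"[REDACTED_{label}]", prompt)
    return prompt


if __name__ == "__main__":
    snippet = 'aws_key = "AKIAABCDEFGHIJKLMNOP"  # owner: alice@example.com'
    print(redact(snippet))
```

Labeled placeholders, rather than blank deletions, keep the surrounding code readable so the assistant can still reason about its structure.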

Full visibility and governance

Gain visibility into AI usage across development cycles and detect potential privacy violations with robust observability.

Any tool, any programming language

Prompt Security integrates with thousands of web-based AI tools and dozens of AI code assistants, supporting nearly 30 programming languages.

How it works

End-to-End Security and Visibility

Time to see for yourself

See how organizations are securely enabling AI with Prompt Security